CityU scholar wins Google award for research on voice-based text composition

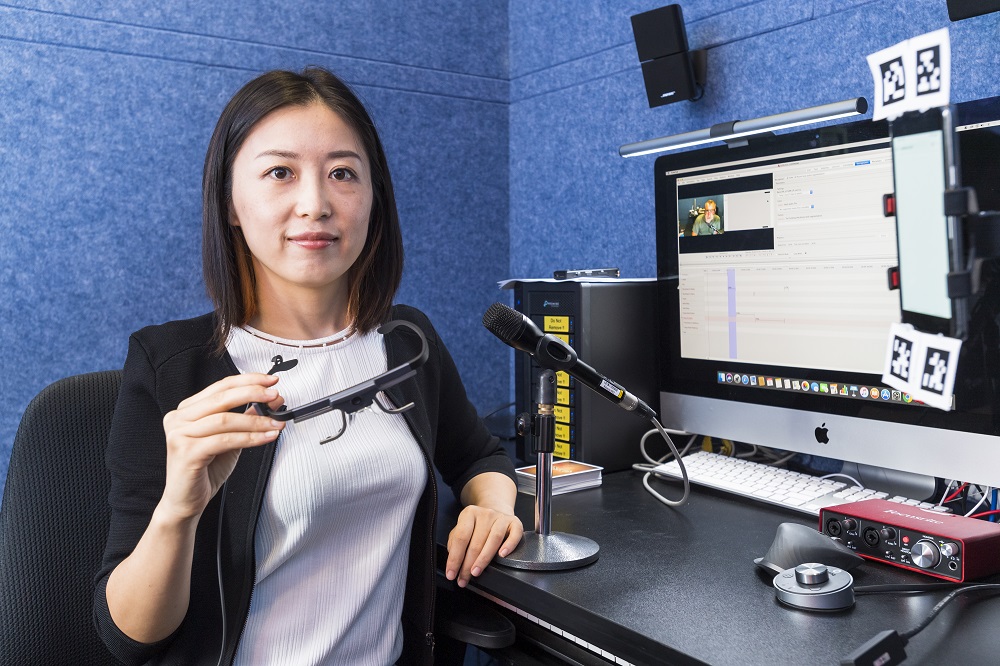

Composing and editing text mainly by speech, without a keyboard, remains challenging. To address this problem, Dr Liu Can, Assistant Professor in the School of Creative Media (SCM) at City University of Hong Kong (CityU), is developing voice interaction for text composition. She has received an international award from Google Research in recognition of her promising work in this area.

“Human-computer interaction is an interesting field, and the related technologies have changed people’s way of living,” said Dr Liu, who is a specialist in this area. She is the only Hong Kong scholar to receive a Google Faculty Research Award for 2019/20. Her proposal was titled “Voice-based Text Composition with Minimal Visual Aids”, and the award falls under the human-computer interaction research area. This year’s Google Research round was highly competitive: only about 15% of proposals were funded after an extensive review involving 1,100 expert reviewers across Google.

Text editing without a keyboard

Dr Liu has been working on the development of voice interaction for text composition since 2018. In her award-winning proposal, she pointed out that recent breakthroughs in deep learning and natural language processing have dramatically improved the accuracy of speech recognition. Voice typing is now commonly available on smartphones with decent speech recognition accuracy. However, using voice for text composition hits a bottleneck when the transcribed text needs to be reviewed and corrected.

“It is our habit to edit text with keyboards. But when you are walking, cooking or driving, typing with a keyboard is very impractical. In such situations, it could be convenient to use voice to take quick notes or compose messages,” explained Dr Liu.

Dr Liu’s award-winning research explores new voice-based graphical interfaces that support a smooth transition between visual and auditory modalities with minimal visual aids, so that users are not required to focus on the screen all the time.

Dr Liu noted that existing systems can execute only prescribed editing commands, for example, adding certain words before a specified word or deleting a specified part of the text. This requires users to remember the exact wording of what they dictated; however, according to Dr Liu’s observation, users usually remember only the meaning of what they said, not the exact words. Moreover, the system needs to be able to distinguish whether the audio input is text content or a command to edit the text. As a result, designing a new system is challenging.

Beneficial to the visually impaired community

Dr Liu is looking for new interaction solutions using machine learning and natural language processing technologies. Her goal is to design a smooth text composition system that takes advantage of natural speech and reduces the need for people to occupy their hands and stare at a screen. “The research can shed new light on the further development of the voice interaction paradigm. It could also benefit the visually impaired community by demanding minimal visual attention,” concluded Dr Liu.

Established in 2005, the Google Faculty Research Awards Program aims to recognise and support world-class research in computer science, engineering and related fields performed at academic institutions around the world. The awards encourage world-class faculty collaboration in pursuing research that has an impact on the community.